Designing the Shift

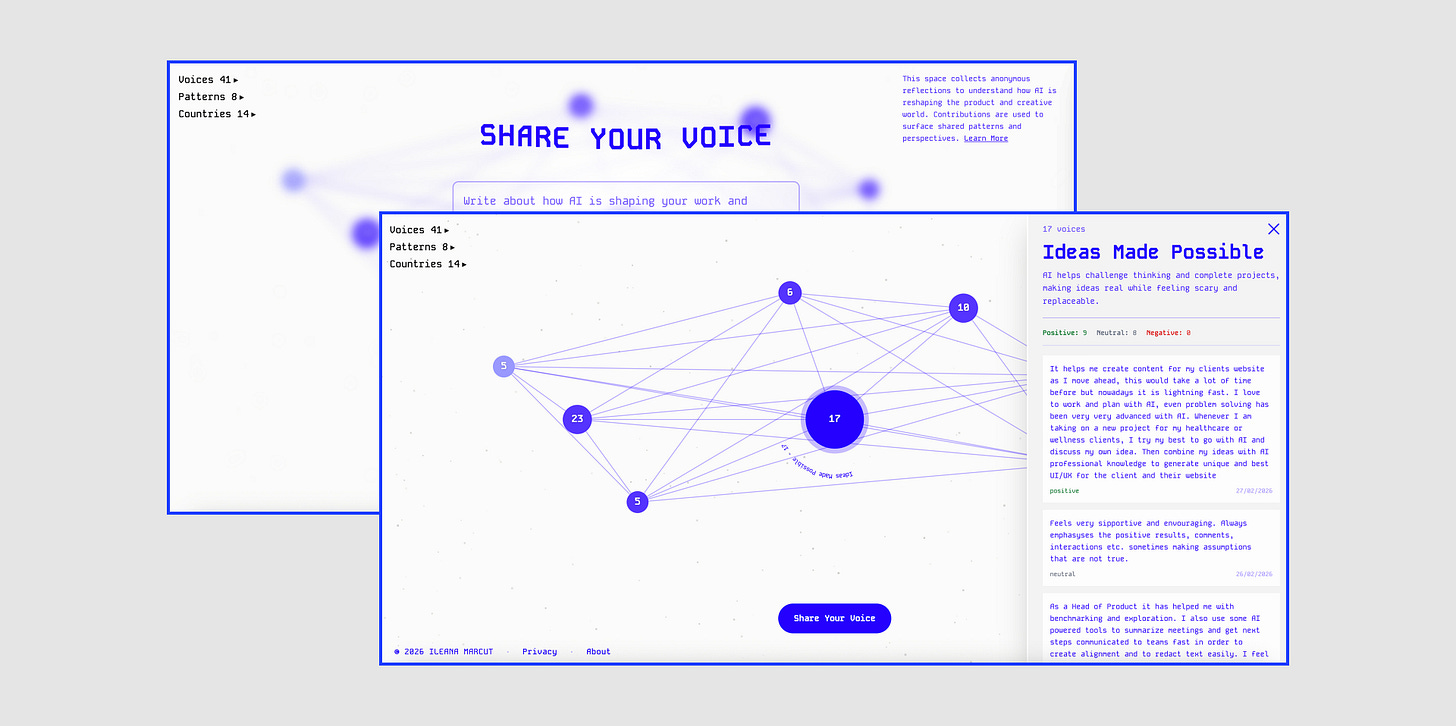

The story behind a system I built with Claude Code to collect anonymous reflections about how AI is changing who we are. How it works, where it broke, and what 40+ voices from 14 countries revealed.

Where This Started

Everyone is talking about AI. We see and hear about it everywhere: opinions, trends, marketing messaging from AI tools, hot takes from influencers. People share what they build, what they’ve automated, how they’ll make 1 million dollars with their vibe-coded app.

I felt drained from my social media bubble, so I thought: is this what the entire world does? And how do people really feel?

When I talk with friends and the people around me, the tone changes. They describe a quiet pressure to adapt faster than they are ready for, or how AI has touched their identity, their confidence, and their sense of where they belong. And some share excitement and energy from new opportunities.

Those feelings rarely make it into public conversations. I wanted to strip away all the marketing, the influencing, the pretending, and create a space where people could just say what they actually feel: scream into the void, get it off their chest, and let others see it and maybe recognise themselves in it.

That thought was the spark of my exploration:

Can we share how we really feel if it’s anonymous?

Do we care what someone has to say if we don’t know who they are?

Can we simply listen and observe without processing or filtering?

As I was defining what form this space would take, I explored how AI can help:

Can it help surface patterns in raw, unfiltered human expression?

Where does it work? Where does it fail?

And this is how Designing the Shift was born.

What Is Designing the Shift 💙

Designing the Shift is a public experimental space for collecting reflections on lived experience: how people feel about the way AI changes how we work and who we become.

It is aimed at people working in product, design, creative, and adjacent roles: designers, developers, product managers, researchers, artists, writers, strategists, and makers.

You visit the space, write a short reflection in your own words, and submit it. There are no accounts, no profiles, no requirements beyond writing a message (or more!).

Contributions are anonymous, and the system stores the text you submit. Each submission becomes part of a shared archive. The system uses AI analysis to identify recurring themes and emerging patterns over time.

So far, 40+ people from 14 countries have submitted reflections, and the system has surfaced 8 patterns.

Not Art, Not Research, Not Tech. All Three.

I shared the raw concept with 5 people to get their first impressions.

All of them, without my mentioning it, called it an art/tech project.

The experience of encountering it feels closer to an exhibition than a study.

Anonymous voices, unprocessed, presented without additional framing.

There are no categories to select and no persona descriptions. The reflections aren’t processed into findings. They’re surfaced collectively as patterns, but the raw voice stays intact.

That’s deliberate. I wanted to see what happens when you remove every layer of performance and just let people speak. The contradictions, the ambiguity, the things that don’t fit neatly into a box. The voices are meant to be listened to, not processed.

This Project Was Not Done in a Day! 🙀

Even if the UI looks simple, it took me around 3-4 days just to write all the documentation and context the AI needed before a single line of code was generated.

By the way, Claude says he noted “roughly 4 weeks of active development” in GitHub.

My Stack:

The Design & Build Process:

Writing and documenting all my ideas in text

Research, exploration, ideation with AI

Sketch ideas in Figma: the nodes concept, colours and fonts

Node exploration in Figma Make, type of nodes and library tests

Documentation, text content, note ideas in Notion

Implementation, iteration, testing with Claude Code

Publish the code to GitHub

The System Behind It

The system has two main parts: a set of general rules that protect contributors and define how the space works, and an AI processing layer that reads, analyses, and surfaces what people share.

The AI processing layer runs on OpenAI’s GPT-5 Nano. Each step in the pipeline has its own dedicated system prompt with specific instructions for that task.

When someone submits a reflection, the AI layer takes over:

Redaction. The system scans for sensitive data. Anything that could trace back to a person gets removed or replaced before the text goes any further.

Safety. A separate prompt checks for safety compliance. Most submissions pass automatically. Edge cases get flagged for human review.

Classification. The text is matched against an evolving taxonomy of patterns. If an existing pattern fits, the voice is assigned to it. If it doesn't fit anything, the system can propose a new one.

Labelling. Pattern names are generated to be neutral and descriptive, based on the actual words people contributed. When you explore a pattern, you see the voices that shaped it. Nothing is abstracted away from what was actually said.
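To make the flow above concrete, here is a minimal sketch of how the first three steps could be chained. It is not the project's actual code: the prompt texts, the `Result` fields, the `process` function, and the `ModelFn` type are all hypothetical, and the model call is passed in as a plain function so the sketch does not depend on any particular API. Labelling is left out to keep it short.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical type: (system_prompt, user_text) -> model reply.
# In a real system this would wrap a call to the hosted model.
ModelFn = Callable[[str, str], str]

# Placeholder prompts, one per step. The project's real prompts are not shown here.
PROMPTS = {
    "redact": "Remove or replace anything that could identify a person. Return only the text.",
    "safety": "Reply PASS if the text is safe to publish, otherwise reply REVIEW.",
    "classify": "Reply with the best-fitting pattern name from the list, or NEW: <name>.",
}


@dataclass
class Result:
    text: str                       # redacted text; the raw input is not kept
    needs_review: bool = False      # True when a human should look at it
    pattern: Optional[str] = None   # assigned or proposed pattern name
    is_new_pattern: bool = False    # True when the model proposed a new pattern


def process(raw: str, patterns: list[str], model: ModelFn) -> Result:
    # Step 1: redaction runs first, so later steps only see the cleaned text.
    redacted = model(PROMPTS["redact"], raw).strip()
    result = Result(text=redacted)

    # Step 2: safety check. Anything other than a clear PASS goes to a human.
    verdict = model(PROMPTS["safety"], redacted).strip().upper()
    if verdict != "PASS":
        result.needs_review = True
        return result

    # Step 3: classification against the current list of patterns.
    user = f"Patterns: {', '.join(patterns)}\nText: {redacted}"
    reply = model(PROMPTS["classify"], user).strip()
    if reply.startswith("NEW:"):
        result.pattern = reply[4:].strip()
        result.is_new_pattern = True
    elif reply in patterns:
        result.pattern = reply
    else:
        # The reply matched nothing we know; flag it instead of guessing.
        result.needs_review = True
    return result
```

Passing the model in as a function is a design choice for the sketch: each step can be tested with a fake model before any real calls are made.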

How a Single Label Biased the Entire System

The first real submissions started coming in. I watched the system process them, curious to see what it would do with real voices instead of test data.

I immediately knew something was off.

One of the pattern descriptions read: “Views AI as a neutral tool whose value depends on the designer’s skill and intent.” Another: “Feeling one’s designer identity and value are undermined.” A category was labeled “Designer Identity Threat.”

But the system doesn’t know people’s roles. Submissions are anonymous. Someone who writes “I wonder if designers will replace PMs” could be a PM themselves. The system was confidently assigning designer framing to every voice that came in, regardless of who was speaking.

When I dug into it, I found the issue: the bias ran through the entire system!

The classification prompt said “collecting designer reflections.” The root node of the taxonomy was labeled “Broad area of designer experience with AI.” The sentiment guidance measured “sentiment toward AI in design.”

I never specified any of that. I defined what the system should do at a high level, and I relied on Claude Code to implement the system based on the documentation I provided.

But it refined the prompts, the labels, and the processing logic.

And it simply assumed everyone using the system would be a designer, like me!

The bias wasn’t in one place. It was layered through the entire processing pipeline: the system prompt, the root node, the sentiment guidance, the category labels, the description generator. Each layer reinforced the assumption from the layer before it.

The lesson:

The parts you leave open are the parts the AI fills in with its own assumptions.

And those assumptions can look perfectly reasonable until they meet real data.

The fix required changes across the entire pipeline.

But the lesson is bigger than the fix.
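One way to catch this kind of layered assumption earlier is to audit every string the system itself writes (prompts, root labels, guidance text) for words that presume a role. The sketch below is an illustration, not the fix I actually shipped: `ROLE_TERMS` and `find_role_assumptions` are hypothetical names, and the sample strings are the fragments quoted above plus one neutral line for contrast.

```python
import re

# Hypothetical list of role words the system should not assume about contributors.
ROLE_TERMS = ["designer", "designers", "design"]


def find_role_assumptions(texts: dict[str, str], terms=ROLE_TERMS) -> dict[str, list[str]]:
    """Return, per named text, the role words it contains (case-insensitive)."""
    pattern = re.compile(
        r"\b(" + "|".join(map(re.escape, terms)) + r")\b", re.IGNORECASE
    )
    hits = {}
    for name, text in texts.items():
        found = sorted({match.lower() for match in pattern.findall(text)})
        if found:
            hits[name] = found
    return hits


# System-authored strings to audit; the first three are quoted from the pipeline.
system_texts = {
    "classification_prompt": "collecting designer reflections",
    "root_node": "Broad area of designer experience with AI",
    "sentiment_guidance": "sentiment toward AI in design",
    "neutral_example": "collecting reflections about working with AI",
}

for name, words in find_role_assumptions(system_texts).items():
    print(f"{name}: {', '.join(words)}")
```

A check like this belongs on the system's own wording only. Contributors are free to write about designers; the problem is the system assuming that they are designers.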

Current State: 40+ voices, 14 countries, 8 patterns

I didn’t know what to expect when I started this.

I had a simple goal: collect 100 voices.

Curiosity was the fuel. I knew things wouldn’t work perfectly. But I was genuinely surprised, not by the imperfections, but by where they showed up.

I had no expectations about what people would say or what patterns would form. That’s the beauty of the experiment: the surprise, and the openness of what it can evolve into.

The system surfaced these patterns organically from what people chose to share:

Daily AI Dependence (23 voices)

AI is used daily across creative work, enabling new projects but feeling overwhelming and uneven to adopt.

Ideas Made Possible (17 voices)

AI helps challenge thinking and complete projects, making ideas real while feeling scary and replaceable.

Shifting Job Roles (10 voices)

Unclear job changes as AI pushes output-first work and blurs lines between designers and PMs.

Intent and Responsibility (7 voices)

AI blurs human-made and AI-made, making intention and responsibility feel more important than ever.

Fear of Replacement (6 voices)

Using AI feels empowering but also sparks fear of being too slow and replaced at work.

No Clear AI Process (5 voices)

AI use lacks clear process and direction, forcing navigation between AI and non-AI workflows.

Collective Shift Vision (4 voices)

AI is framed as a liberator that shifts society toward collaboration, community, and less screen work.

AI Human Blur (3 voices)

Fear of not telling AI from human work, raising new questions about intention and responsibility.

The system is designed to evolve as more people contribute. New voices will shift existing patterns, form new ones, and retire others. What you see today is a snapshot. What it becomes depends on who shows up next.

Closing Thoughts 💭

Building Designing the Shift reminded me that the role of designers is quietly expanding. From the outside, the project looks simple. A page where people submit reflections and explore emerging patterns.

But most of the work was not in the interface. The real design challenge lived in the system behind it. The prompts, the rules, the processing logic, the safeguards, and the interpretation layers that decide how human voices are read and organized.

Designing those systems requires a different kind of thinking.

If you explore Designing the Shift, treat it less like a report and more like a living archive of a moment in time. Let the voices be what they are; notice how they make you feel.

Thanks for reading! 🫶

A huge thank you to all the people who supported me in this project, gave feedback early on, shared voices, and helped shape what this became! 💙 Andrei Marcut, Dana Vetan, John Vetan, Karo (Product with Attitude), Vadym Grin, Jenny Ouyang, Elena | AI Product Leader + everyone who shared their voices!

