From idea to prototype with AI

How I co-built a new feature using ChatGPT and Gemini.

Hi! 👋 I’m Ileana, a Product Strategist and UX Designer focused on designing AI-powered experiences and exploring how AI can improve the way I work.

In this post, I'll walk you through one of my experiments with AI tools, from idea to clickable prototype. This wasn't a side exercise: I used the process on a real project.

I’m sharing what worked, what didn’t, and how it felt to co-create with AI.

Hopefully, it inspires or helps you see how AI could fit into your design process.

The challenge

I needed to add a new feature to one of the products I’m currently working on.

Normally, I’d go through my usual process:

Alignment workshop

Defining requirements

Designing flows

Sketching wireframes

Iterating based on feedback

Building a Figma prototype

Iterating based on feedback

Development handover

And so on…

But this time, I wanted to do it differently. I really wanted to use AI end-to-end, not just for small tasks, but throughout the entire process. No Figma. No traditional tools. Just AI. My goal was to go beyond unfinished experiments and apply this to a real project, from start to finish.

I pitched the idea and got the green light.

Getting started

We knew what was needed, so I ran a quick workshop with the product and engineering teams to define the final requirements.

The scope was to add a new feature to an existing product. We defined the goal, the logic behind the feature, and discussed the technical constraints to get us started.

I used Miro to capture our ideas and notes, then quickly sketched a simple user scenario to define the main steps and flow.

Here’s what happened next.

From notes to a clickable prototype in minutes

I asked ChatGPT to turn the notes into a requirements document for the new feature. I shared the following context with the prompt:

Structured notes describing the feature

Screenshot of the user scenario sketch

Context about the product and its audience

Previous ChatGPT research on the topic

Creating a requirements document with ChatGPT

ChatGPT generated a well-structured, surprisingly solid document.

Of course, it wasn’t perfect. It added extra functionality, changed the order of a few steps, and added more complexity. After three rounds of feedback, the document was ready to be shared and reviewed.

The team reviewed it and added just a couple of comments, and that was it.

Here’s the prompt I used to generate the requirements document:

“Based on your knowledge and initial research on this topic, help me define a feature specification document for this scope. The goal is to capture everything needed to move from idea to design and development implementation. The output should be structured, including the goal of the feature, a step-by-step user flow, and specifications and requirements.”
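I did all of this by hand in the ChatGPT interface, but if you'd rather keep the context reusable, here's a minimal sketch of how the same pieces could be assembled into one prompt. The `build_prompt` helper, the section labels, and the placeholder texts are my own assumptions for illustration; only the instruction text comes from the prompt above. The screenshot is represented as a text description here, since this sketch only builds a string and doesn't call any AI service.

```python
# Instruction text copied from the prompt above.
INSTRUCTION = (
    "Based on your knowledge and initial research on this topic, help me define "
    "a feature specification document for this scope. The goal is to capture "
    "everything needed to move from idea to design and development implementation. "
    "The output should be structured, including the goal of the feature, "
    "a step-by-step user flow, and specifications and requirements."
)


def build_prompt(instruction: str, context: dict) -> str:
    """Join labeled context sections and the instruction into one prompt string."""
    sections = [f"## {label}\n{text.strip()}" for label, text in context.items()]
    return "\n\n".join(sections + [f"## Task\n{instruction}"])


# Placeholder context (hypothetical): swap in your own notes.
context = {
    "Feature notes": "<structured notes from the workshop>",
    "User scenario": "<description of the sketched user scenario>",
    "Product and audience": "<who uses the product and why>",
    "Previous research": "<summary of earlier research on the topic>",
}

prompt = build_prompt(INSTRUCTION, context)
print(prompt)
```

Keeping the context in labeled sections makes it easier to update one piece (for example, the notes after a new workshop) without rewriting the whole prompt.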

Creating a clickable prototype with AI

Once the specs were approved and the logic had been reviewed by both tech and product, it was time to bring the feature to life.

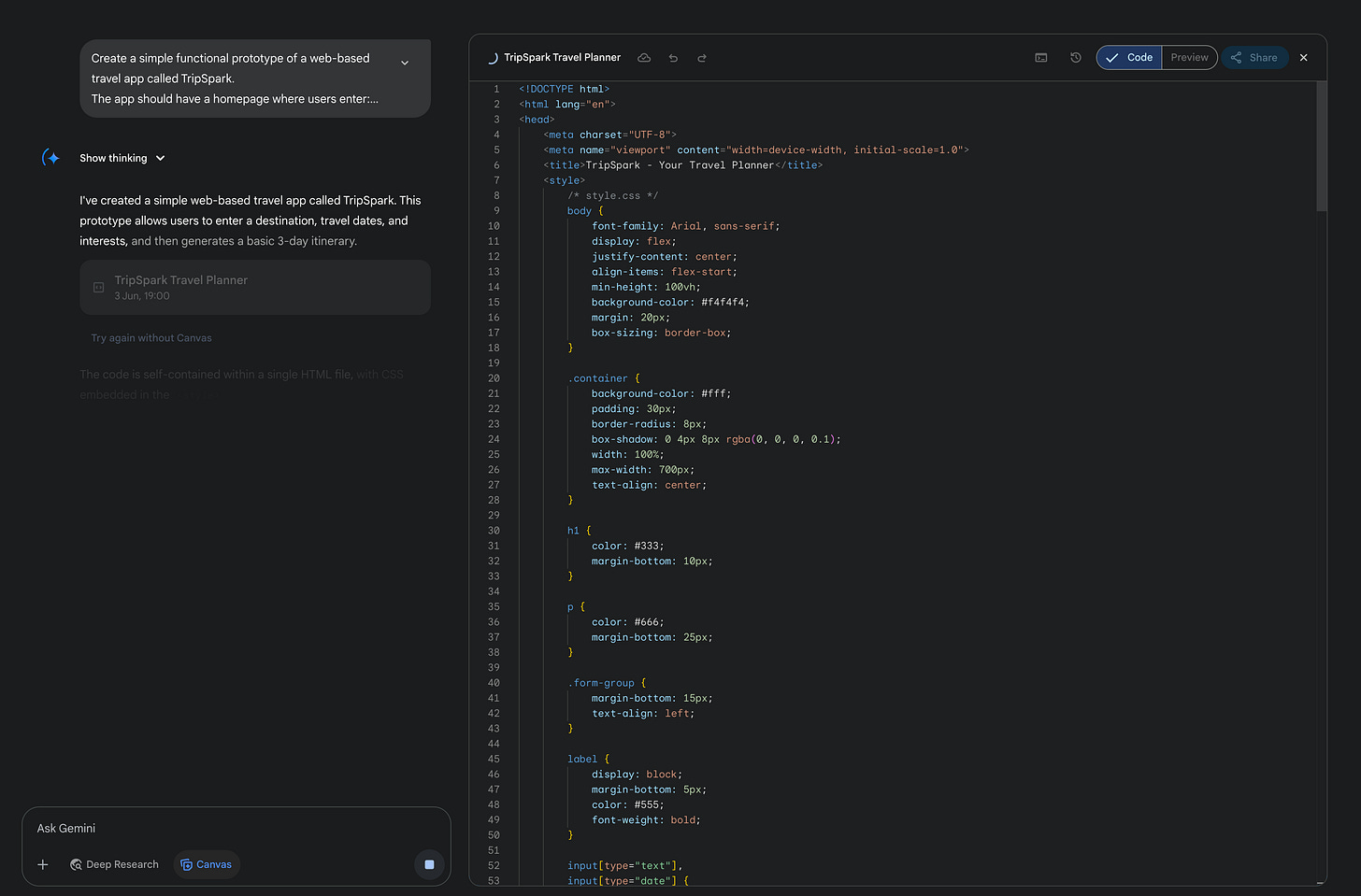

Usually, I’d start sketching in Miro or move to Figma to create the prototype. This time, I asked ChatGPT to generate a working prototype straight from the requirements.

And guess what? It didn’t work. 🫠

The preview canvas inside ChatGPT was broken; it wouldn’t render the UI.

So I copied the code and pasted it into Gemini Canvas instead. I already had a subscription, and it seemed like the fastest option. Claude could have worked too.

And it worked!

I had a fully clickable prototype in seconds! 🚀

If ChatGPT’s canvas preview had worked, I wouldn’t have had to switch tools.

Live feedback and real-time updates

I booked a call with the team to share the prototype.

Everyone was impressed. We walked through the flow together, and I prompted updates live in Gemini as they provided feedback. It was incredibly efficient and fun to co-create in real time.

Then I took it a step further.

I recorded the meeting, pulled the transcript, and fed it back into Gemini, asking it to update the prototype based on the whole conversation.

And it did.

That moment felt super satisfying.

But… can we use this code?

Now that I had a working prototype in code, I started asking myself:

Can we implement this as-is?

Can I hand this to the developer?

Can we make it fit with our design system and standards?

Should I move it to Figma?

I synced with the devs to check.

The answer was clear. The code wasn’t usable out of the box.

It was great for prototyping and discussion, but it didn’t follow our design system, use tokens, or match our code conventions.

We needed another layer or step, something that connects AI output directly to our component library and standards.
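To make that idea a bit more concrete, here's a minimal sketch of what one small piece of such a layer could look like: a check that scans generated code for hard-coded colors and compares them against design tokens. This is not what we built. The token names, the values, and the `audit_colors` function are all hypothetical, and a real design system would export its tokens rather than hard-code them like this.

```python
import re

# Hypothetical design tokens, for illustration only.
DESIGN_TOKENS = {
    "color.primary": "#0055ff",
    "color.surface": "#ffffff",
    "color.text": "#1a1a1a",
}

# Matches six-digit hex colors such as #0055FF.
HEX_COLOR = re.compile(r"#[0-9a-fA-F]{6}\b")


def audit_colors(generated_code: str, tokens: dict) -> dict:
    """Sort hard-coded hex colors into those that map to a token and those that don't."""
    value_to_token = {value.lower(): name for name, value in tokens.items()}
    report = {"replace_with_token": [], "off_system": []}
    found = sorted({color.lower() for color in HEX_COLOR.findall(generated_code)})
    for color in found:
        if color in value_to_token:
            report["replace_with_token"].append(f"{color} -> {value_to_token[color]}")
        else:
            report["off_system"].append(color)
    return report


# Stand-in for a snippet of AI-generated prototype styling.
prototype_css = """
.button { background: #0055FF; color: #FFFFFF; }
.banner { background: #ff8800; }
"""

report = audit_colors(prototype_css, DESIGN_TOKENS)
print(report)
```

In this example, two colors match existing tokens and could be swapped for them, while `#ff8800` is flagged as outside the system. The same pattern could extend to spacing, typography, or component names, which is where most of the real work would be.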

For now, I have created the final version in Figma for handoff. In parallel, I'm exploring ways to make this process more connected so that I can get closer to usable code in future experiments.

Final thoughts 💭

Was it perfect? No.

But it worked. And it saved a lot of time.

Using AI helped me move faster, get feedback sooner, and dynamically involve people in the process.

What worked:

Creating structured documentation super fast

Defining a clear user flow in seconds

Developing a clickable prototype in minutes

Implementing feedback in real time using prompts

What didn’t:

ChatGPT’s preview canvas failed

Gemini had bugs and errors (I thought I’d lost all my work 😱)

The code wasn’t ready for production

I’ll keep experimenting and share more along the way.

If you’ve tried something similar, I’d love to hear how it went.

What worked for you? What didn’t? What tools are you using?

Thanks for reading! 🙃
